Despite being home to 18% of the world’s population, Africa holds less than 1% of global data center capacity. For founders in Lagos, Nairobi, and Cairo, the AI race is not a battle of algorithms; it is a battle of silicon and electricity.

The global AI industry is usually framed as a competition between algorithms, talent, and venture capital. But for the engineer training a language model from a co-working space in Nairobi, or the fintech founder deploying a fraud-detection system in Lagos, the bottleneck is more immediate.

It is access to GPU compute, stable power, and affordable data infrastructure. These are physical constraints — and they are disproportionately severe across Africa.

This is the story of the compute divide — not as an abstraction, but as a concrete, measurable, structural barrier. This shapes which companies get built, which problems get solved, and which regions participate in the AI economy. And it is also a story of how that divide is beginning to close.

The African AI Compute Divide: Key Statistics

The current landscape of AI compute in Africa is defined by a stark contrast between the continent’s human potential and its hardware limitations. Here is a breakdown of the numbers shaping the industry today:

| Metric | Statistic | Context |
| --- | --- | --- |
| Global Data Center Capacity | <1% | Represents the significant infrastructure gap compared to North America, Europe, and Asia. |
| Global Population Share | 18% | Highlights the massive disconnect between human capital/data generation and processing power. |
| High-End Hardware Cost | $40,000+ | The unit price of a single NVIDIA H100 GPU, creating a massive barrier to entry for local startups. |

Why These Numbers Matter

- Infrastructure Scarcity: With less than 1% of the world’s data centers, African startups are forced to host their models on overseas servers, incurring high latency and “egress” fees.

- The Scalability Barrier: When the price of one industry-standard chip ($40k) exceeds the entire pre-seed funding of some local startups, innovation is restricted to those with deep pockets or international backing.

- Economic Opportunity: The 18% of the world’s population living in Africa represents a massive untapped market for localized AI models (covering regional languages and contexts), but these models require local AI compute to be commercially viable.

Digital map of Africa highlighting AI compute infrastructure and data center connectivity nodes

The structural barriers to AI compute in Africa

The compute gap is not one problem — it is a compounding stack of four distinct structural barriers. Each one alone would be manageable. Together, they create an environment where building a serious AI product on the continent costs two to four times what it would in North America or Western Europe, if it is achievable at all.

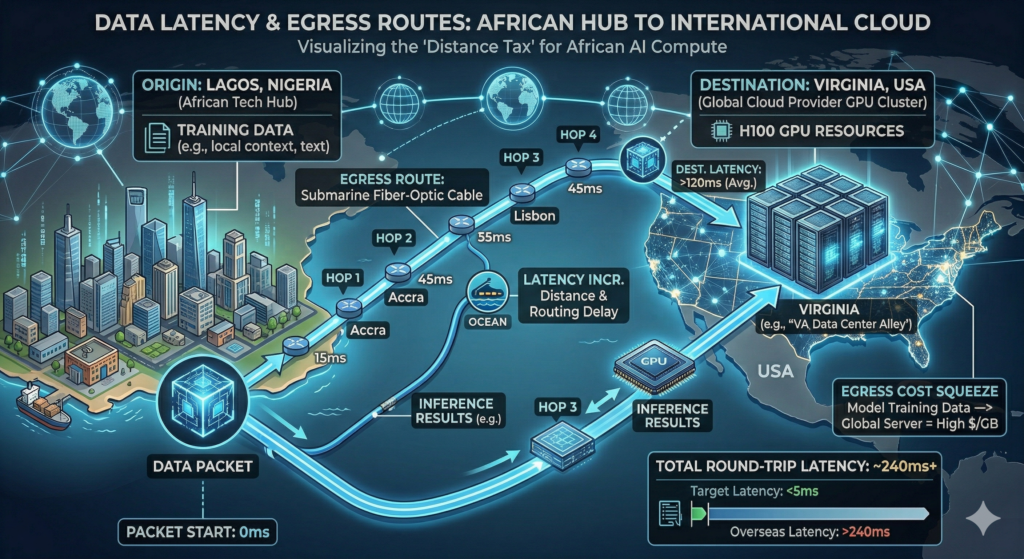

01 The Distance Tax: Latency and Egress Fees

The dominant model for African startups is still “Big Cloud” — AWS, Azure, and Google Cloud. Until very recently, these providers maintained few to no high-density GPU clusters (such as NVIDIA H100 or B200 arrays) physically located on the African continent. The nearest significant clusters are in Europe or the eastern United States.

When a startup in Kenya trains a model on a server in Northern Virginia (us-east-1), it pays what infrastructure veterans call a “distance tax.” The penalty is not just latency, though round-trip times of 200ms or more across transatlantic subsea cables make real-time inference painful. The real financial hit is egress costs: the per-gigabyte fees cloud providers charge to move data out of their networks.

For a startup working with terabytes of local-language audio, satellite imagery, or financial records, egress bills can exceed the cost of the compute itself.
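To make that comparison concrete, here is a minimal sketch of the arithmetic. Every input is a hypothetical placeholder: the per-gigabyte egress rate, the GPU hourly rate, the dataset size, and the number of times the dataset is pulled out of the provider's network. None is a quoted price from any provider, and the function names are illustrative.

```python
def egress_cost_usd(dataset_tb: float, price_per_gb: float, transfers: int = 1) -> float:
    """Fee for moving a dataset out of a cloud provider's network `transfers` times."""
    gigabytes = dataset_tb * 1000  # decimal terabytes to gigabytes
    return gigabytes * price_per_gb * transfers


def compute_cost_usd(gpus: int, hours: float, price_per_gpu_hour: float) -> float:
    """Rental cost of a training run billed per GPU-hour."""
    return gpus * hours * price_per_gpu_hour


# Hypothetical inputs for illustration only.
egress = egress_cost_usd(dataset_tb=50, price_per_gb=0.09, transfers=4)
compute = compute_cost_usd(gpus=8, hours=72, price_per_gpu_hour=3.0)

print(f"egress:  ${egress:,.0f}")
print(f"compute: ${compute:,.0f}")
print("egress exceeds compute" if egress > compute else "compute exceeds egress")
```

With these placeholder inputs the transfer fees come out at about ten times the compute bill. The point is the shape of the calculation rather than the specific figures: egress scales with data volume and the number of transfers, while compute scales with GPU-hours.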

This is not an edge case. It is the default situation for the vast majority of African AI companies in 2025 and early 2026. And it makes data-intensive model training — particularly on large, unstructured datasets of African languages, healthcare records, or geospatial data — prohibitively expensive at scale.

Data latency and egress routes from African tech hubs to international cloud providers.

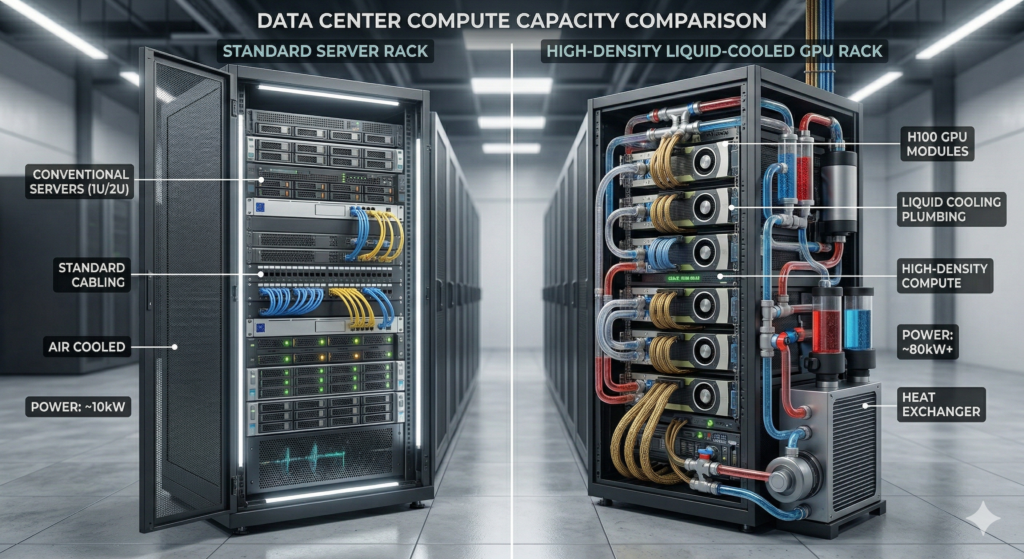

02 The Energy-Density Paradox

Modern AI GPU racks are physically demanding in ways that most existing African data center infrastructure was never designed to handle. A rack of NVIDIA H100s requires between 50kW and 100kW of power, roughly ten times the draw of a standard web-hosting or banking-workload rack. Such racks also produce enormous amounts of heat, requiring specialised liquid cooling systems to prevent hardware failure.

Most African data centers were built in the 2000s and 2010s to support the continent’s banking and telecommunications boom. They were designed for blade servers running transaction processing workloads. The thermal and electrical demands of AI training clusters are a different order of magnitude. Retrofitting these facilities for GPU compute is expensive and technically complex — often requiring complete redesigns of power distribution and cooling infrastructure.

Compounding this, industrial-grade power in many African markets is either unreliable or extraordinarily expensive. A data center operator who cannot guarantee 99.999% uptime for GPU hardware — because the national grid is unstable — must invest in diesel generators or private solar-plus-battery farms to fill the gap. Every kilowatt-hour of backup generation carries a cost premium of two to five times grid electricity, and those costs are passed directly to the startup buying GPU time.
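A rough sketch of how that premium feeds into an operator's average energy price is shown below. It assumes a simple two-source model in which the grid serves a fixed fraction of hours and backup generation covers the rest. The grid price and the availability figure are hypothetical; only the two-to-five-times multiplier comes from the text.

```python
def blended_cost_per_kwh(grid_price: float, backup_multiplier: float,
                         grid_availability: float) -> float:
    """Average energy price when backup generation covers grid outages.

    grid_availability is the fraction of hours (0 to 1) served by the grid;
    the remaining hours are served by backup at grid_price * backup_multiplier.
    """
    if not 0.0 <= grid_availability <= 1.0:
        raise ValueError("grid_availability must be between 0 and 1")
    backup_price = grid_price * backup_multiplier
    return grid_availability * grid_price + (1.0 - grid_availability) * backup_price


GRID_PRICE = 0.10         # USD per kWh, hypothetical
GRID_AVAILABILITY = 0.80  # hypothetical: grid serves 80% of hours

for multiplier in (2, 5):  # the two-to-five-times premium from the text
    blended = blended_cost_per_kwh(GRID_PRICE, multiplier, GRID_AVAILABILITY)
    uplift = blended / GRID_PRICE - 1.0
    print(f"backup at {multiplier}x grid: ${blended:.3f}/kWh ({uplift:.0%} above grid)")
```

The model ignores the capital cost of the generators or batteries themselves, so it understates the full burden. It is meant only to show how outage hours and the backup premium combine.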

“For African AI startups, the compute gap is not just about GPUs. It is about the entire infrastructure stack — energy, cooling, fibre, and finance — failing simultaneously.”— Infrastructure analysis, AI Compute Africa landscape, 2026

03 The Hyperscaler Queue and Capital Mispricing

GPU supply remains a global, zero-sum competition. NVIDIA allocates the majority of its highest-end chips — H100s, A100s, and increasingly B200s — to its largest enterprise customers: Microsoft, Meta, Google, Amazon, and Oracle. These companies buy by the tens and hundreds of thousands of units under long-term supply agreements.

An African startup seeking 8 to 16 GPUs for a month-long training run is, in the language of global hardware distribution, a “low-priority customer.” Spot availability is scarce, pricing is volatile, and procurement timelines can stretch to months. This is not unique to Africa — it affects small GPU buyers globally — but it intersects with a second problem that is more specifically African: the “Africa Risk Premium.”

International investors and lenders systematically price African infrastructure projects as higher-risk — often regardless of the underlying business quality.

A Kenyan startup building a local GPU cloud faces borrowing costs two to three percentage points above a comparable European rival. It is also trying to finance hardware that costs upwards of $40,000 per GPU, is priced in dollars, and depreciates fast in an industry where the next generation arrives every two years.
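The effect of that premium on a hardware loan can be sketched with the standard annuity formula. The $40,000 GPU price and the three-point premium are taken from the text. The base rate, cluster size, and loan term are hypothetical, and the sketch assumes a dollar-denominated, fully amortising loan.

```python
def monthly_payment(principal: float, annual_rate: float, months: int) -> float:
    """Fixed monthly payment on a fully amortising loan (standard annuity formula)."""
    if months <= 0:
        raise ValueError("months must be positive")
    r = annual_rate / 12  # monthly interest rate
    if r == 0:
        return principal / months
    return principal * r / (1 - (1 + r) ** -months)


GPU_PRICE = 40_000  # USD per GPU, the figure used in the text
GPUS = 8            # hypothetical cluster size
BASE_RATE = 0.08    # hypothetical borrowing cost for the European comparator
PREMIUM = 0.03      # upper end of the two-to-three-point premium in the text
MONTHS = 36         # hypothetical loan term

principal = GPU_PRICE * GPUS
base = monthly_payment(principal, BASE_RATE, MONTHS)
with_premium = monthly_payment(principal, BASE_RATE + PREMIUM, MONTHS)
extra_paid = (with_premium - base) * MONTHS

print(f"monthly payment at base rate: ${base:,.0f}")
print(f"monthly payment with premium: ${with_premium:,.0f}")
print(f"extra paid over the term:     ${extra_paid:,.0f}")
```

This isolates the interest-rate premium only. It does not model exchange-rate movements or the resale value of hardware that is a generation old by the time the loan is repaid.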

The result is a vicious cycle: local GPU cloud infrastructure is expensive to build, which makes it scarce and forces startups to use expensive overseas compute. That in turn makes them less competitive and reinforces investor scepticism about African AI as an asset class.

04 Currency Volatility vs. Dollar-Denominated Compute

GPU compute is, in practice, a dollar-denominated commodity. AWS, Azure, Google Cloud, CoreWeave, Lambda Labs — every major GPU cloud prices in USD. For a Nigerian startup that raises its seed round in Naira, or a Kenyan company whose revenue is in Kenyan Shillings, this creates a structural financial risk that peers in Silicon Valley simply do not face.

Nigeria’s Naira depreciated by more than 40% against the dollar between 2023 and 2025. When a startup budgets six months of model training at a fixed USD cost, a sudden devaluation of even 20% can effectively destroy 20% of its R&D budget overnight, with no warning and no hedge.

Long-duration training runs, which can stretch across weeks or months for serious foundation model work, transform currency risk into a speculation problem that no startup’s finance team is equipped to manage.
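The devaluation arithmetic can be sketched as follows. The budget, exchange rate, and GPU price are hypothetical placeholders, and "depreciation" is taken to mean the fraction of its dollar value that the local currency loses.

```python
def rate_after_depreciation(local_per_usd: float, depreciation: float) -> float:
    """New exchange rate after the local currency loses `depreciation` of its USD value."""
    if not 0.0 <= depreciation < 1.0:
        raise ValueError("depreciation must be in [0, 1)")
    return local_per_usd / (1.0 - depreciation)


def gpu_hours_affordable(local_budget: float, local_per_usd: float,
                         usd_per_gpu_hour: float) -> float:
    """GPU-hours a local-currency budget buys at a USD-denominated price."""
    return local_budget / local_per_usd / usd_per_gpu_hour


LOCAL_BUDGET = 100_000_000  # hypothetical budget in local currency
RATE_BEFORE = 1_000.0       # hypothetical local units per USD
USD_PER_GPU_HOUR = 3.0      # hypothetical rental price

rate_after = rate_after_depreciation(RATE_BEFORE, 0.20)
hours_before = gpu_hours_affordable(LOCAL_BUDGET, RATE_BEFORE, USD_PER_GPU_HOUR)
hours_after = gpu_hours_affordable(LOCAL_BUDGET, rate_after, USD_PER_GPU_HOUR)
lost = 1.0 - hours_after / hours_before

print(f"exchange rate: {RATE_BEFORE:,.0f} -> {rate_after:,.0f} per USD")
print(f"GPU-hours:     {hours_before:,.0f} -> {hours_after:,.0f}")
print(f"training capacity lost: {lost:.0%}")
```

Because the compute price is fixed in dollars, the share of training capacity lost equals the share of dollar value the currency loses, whatever the starting budget or GPU price.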

This dynamic does not affect all African markets equally. South Africa, with a more liquid currency market and closer integration with European financial systems, has more hedging options. But for the majority of sub-Saharan and North African startups, the dollar-compute problem is a persistent, structural drain on financial efficiency.

Traditional data center server racks and high-density liquid-cooled GPU clusters for AI compute.

Technical Context · Power & Density

A standard 2U server in a co-location facility draws approximately 400W to 600W. A single NVIDIA H100 SXM5 GPU draws 700W on its own. An 8-GPU HGX server — the standard configuration for serious AI training — draws 6.4kW to 10kW per unit. A full rack of AI servers (8 to 10 units) requires 50kW to 100kW of dedicated power, plus equivalent cooling capacity.

Legacy African data centers, built to a standard density of 4kW to 8kW per rack, would need to reduce their rack count by 85–90% and completely replace their cooling infrastructure to support even a small GPU cluster. This is not a software upgrade; it is a civil engineering project.
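The density figures above can be checked with a few lines of arithmetic. This sketch assumes electrical power is the only constraint (cooling is ignored) and uses a hypothetical hall of 100 legacy racks. The per-server and per-rack figures are the ones quoted in the text, and the exact reduction depends on which ends of those ranges are paired.

```python
def rack_power_kw(servers_per_rack: int, kw_per_server: float) -> float:
    """Total electrical draw of one rack."""
    return servers_per_rack * kw_per_server


def rack_reduction(legacy_rack_kw: float, ai_rack_kw: float) -> float:
    """Fraction of rack positions given up when the same power feeds AI racks."""
    return 1.0 - legacy_rack_kw / ai_rack_kw


def ai_racks_supported(legacy_racks: int, legacy_rack_kw: float, ai_rack_kw: float) -> int:
    """Whole AI racks that fit inside a hall's existing power budget."""
    hall_budget_kw = legacy_racks * legacy_rack_kw
    return int(hall_budget_kw // ai_rack_kw)


# Rack draw implied by the per-server figures in the text.
print(f"8 servers at 6.4kW:  {rack_power_kw(8, 6.4):.1f}kW per rack")
print(f"10 servers at 10kW: {rack_power_kw(10, 10.0):.1f}kW per rack")

# Hypothetical hall: 100 legacy racks, mid-range 6kW each, refitted for 50kW AI racks.
print(f"rack count reduction: {rack_reduction(6, 50):.0%}")
print(f"AI racks supported:   {ai_racks_supported(100, 6, 50)}")
```

Pairing a mid-range legacy density with the lower end of the AI rack range lands inside the 85–90% reduction quoted above. Denser AI racks or sparser legacy halls push the figure higher.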

The 2026 shift: local GPU infrastructure arrives

The situation is not static. Several forces are beginning to materially change the landscape for AI compute in Africa. Some are driven by local entrepreneurs and governments, others by the global hyperscalers, which are finally recognising the continent as a growth market they cannot ignore.

Key Initiatives Reshaping the Market

Cassava AI Factories

Deploying NVIDIA-powered AI compute clusters in South Africa, Kenya, and Nigeria. By co-locating with existing power and cooling infrastructure and partnering with national governments on sovereign AI mandates, Cassava aims to bring hyperscaler-grade GPU availability to local startup ecosystems at locally-competitive prices. Data stays on the continent, eliminating the egress cost problem entirely.

Atlancis (Servernah)

Launched East Africa’s first AI Factory in Kenya using Open Compute Project (OCP) hardware designs, which significantly reduce capital costs by using commodity components rather than proprietary server architectures. OCP-based GPU clusters cost 20–40% less to build than equivalent proprietary alternatives, potentially making local GPU-as-a-Service economically viable at smaller scale.

Sovereign AI Clouds

Governments across Africa, including Kenya, Nigeria, Rwanda, and Egypt, are beginning to treat GPU compute as a strategic national asset, comparable in policy terms to electricity generation or road infrastructure. Rwanda’s national AI strategy explicitly frames data sovereignty and local compute as prerequisites for participation in the global AI economy, not optional extras.

Hyperscaler Expansion

Microsoft Azure, Google Cloud, and AWS have all announced or expanded African cloud regions between 2023 and 2026. While these initial regions are primarily focused on general compute and storage rather than GPU clusters, they represent a foundational infrastructure investment that reduces the baseline latency and data sovereignty problems for African startups.

The engineering response: efficiency-first architecture

While the infrastructure investment cycle plays out, African AI startups are not waiting passively. The compute constraint has produced a genuine engineering culture shift, one that may, paradoxically, produce competitive advantages in markets where efficiency matters as much as raw capability.

The dominant response to scarce, expensive GPU time is a design philosophy that Silicon Valley companies — flush with compute budgets — have largely abandoned: efficiency-first engineering. GPU time becomes the scarcest resource in the system. Everything else is optimised around using less of it.

The Three Pillars of Efficiency-First AI

1. Small Language Models (SLMs) and task-specific fine-tuning.

Rather than training or running general-purpose 70B+ parameter models, African AI startups are leading the adoption of compact, highly optimized models fine-tuned for specific domains: customer service in Swahili, agricultural advisory in Hausa, or medical triage in Amharic. A 3B parameter model that outperforms GPT-4 on a specific task at 1% of the inference cost is a better product, not a compromise.

2. Inference at the edge.

Moving model inference closer to the user — to local servers, mobile devices, or edge data centres — avoids the round-trips to overseas GPU clusters that dominate both latency and cost. Africa’s low connectivity in rural and semi-urban markets is a constraint that pushes toward on-device and near-device inference, which happens to align with where AI architecture is heading globally.

3. Local data peering and interconnection.

Using African Internet Exchange Points (IXPs) — now present in more than 40 African cities — to route AI API traffic through local infrastructure rather than the public internet. This eliminates the bulk of egress costs and reduces latency for inference workloads by an order of magnitude on intra-continental routes.

Key Insight · Systems Analysis

Africa’s compute challenge is fundamentally a systems problem, not a hardware problem. The bottleneck is not simply the number of GPUs; it is the absence of a coordinated infrastructure stack. A single H100 cluster in Nairobi delivers limited value if it sits behind a congested submarine cable, runs on diesel at four times grid cost, and can only be accessed by startups paying in USD.

Real progress requires synchronised investment across three dimensions that must move together:

- Green energy

- High-density data centers with liquid cooling

- Favorable hardware import and financing policies

What the compute gap means for African AI products

The product decisions no one talks about

The consequences of the compute divide are not merely financial. They shape which AI products get built and which problems get prioritised. When GPU time costs three times more, startup teams make different product decisions.

Applications requiring less training compute move up the roadmap. Inference-light architectures become the default. Continuous retraining pipelines get cut.

A language model gap too

Foundation models trained on African languages remain almost entirely out of reach for African-headquartered companies. Training one requires massive GPU budgets and months of compute time. The models that underpin African language AI today — covering Yoruba, Swahili, Amharic, Darija, and Zulu — were mostly built by well-funded research teams in North America or Europe. No African startup can currently afford the GPU clusters required to replicate that work.

Modern sustainable data center in Namibia powered by solar energy for local AI model training.

Compute as a sovereignty question

The compute gap, in this sense, is also a representation gap. The training data and optimization objectives of the AI systems Africans use are largely set by institutions with no direct accountability to African users or governments.

Sovereign AI infrastructure is therefore not just an economic question. It determines who gets to define what AI systems value, optimize for, and understand. Governments from Kigali to Cairo are beginning to treat local GPU capacity as a matter of strategic national interest — not merely a business opportunity.

Outlook: The next 24 months

The trajectory for AI compute in Africa through 2026 and 2027 is, cautiously, positive. Hyperscaler regional expansion, locally-built AI factory infrastructure, and government sovereign AI programmes will all push more GPU capacity onto the continent. Raw availability will rise.

But availability is not affordability. The next challenge — already visible — is sustaining the cost once access exists. Currency volatility, high borrowing costs, and dollar-denominated pricing will not be solved by adding more racks to a Nairobi data center.

The startups and investors who understand this distinction are building for it now, with financial instruments, local-currency pricing models, and efficiency practices designed for the African context. They will likely define the first wave of genuinely competitive African AI companies. The compute gap is closing. Who benefits from its closure is still an open question.